Weekly Briefing: EU AI Act Amendments, OpenAI’s Canadian Privacy Investigation, Geofencing Warrants, AI in Law Enforcement, Greece’s Constitutional AI Proposal

Newsletter Edition 95: Reforms to the EU AI Act, Canada’s privacy findings against OpenAI, the constitutional battle over geofence warrants, and Greece’s plan to embed AI oversight in its constitution.

Technology law is entering a more confrontational phase. Governments are no longer treating AI as a neutral productivity tool but as a system capable of influencing constitutional rights, criminal investigations, healthcare, public trust, and democratic authority itself. This edition examines the EU’s decision to delay parts of the AI Act while expanding its prohibitions, Canada’s findings against OpenAI’s data practices, the constitutional implications of geofence warrants before the U.S. Supreme Court, and the growing normalisation of AI within policing and surveillance institutions. It also looks at Greece’s proposal to constitutionalise AI oversight and the UAE’s expansion into AI-driven legal services.


🔥 This edition covers important technology law news and updates from the EU, U.S., Canada, UAE, Greece and other jurisdictions. Keep reading for the latest opportunities, including free courses, events, scholarships, and a list of remote tech lawyer jobs.

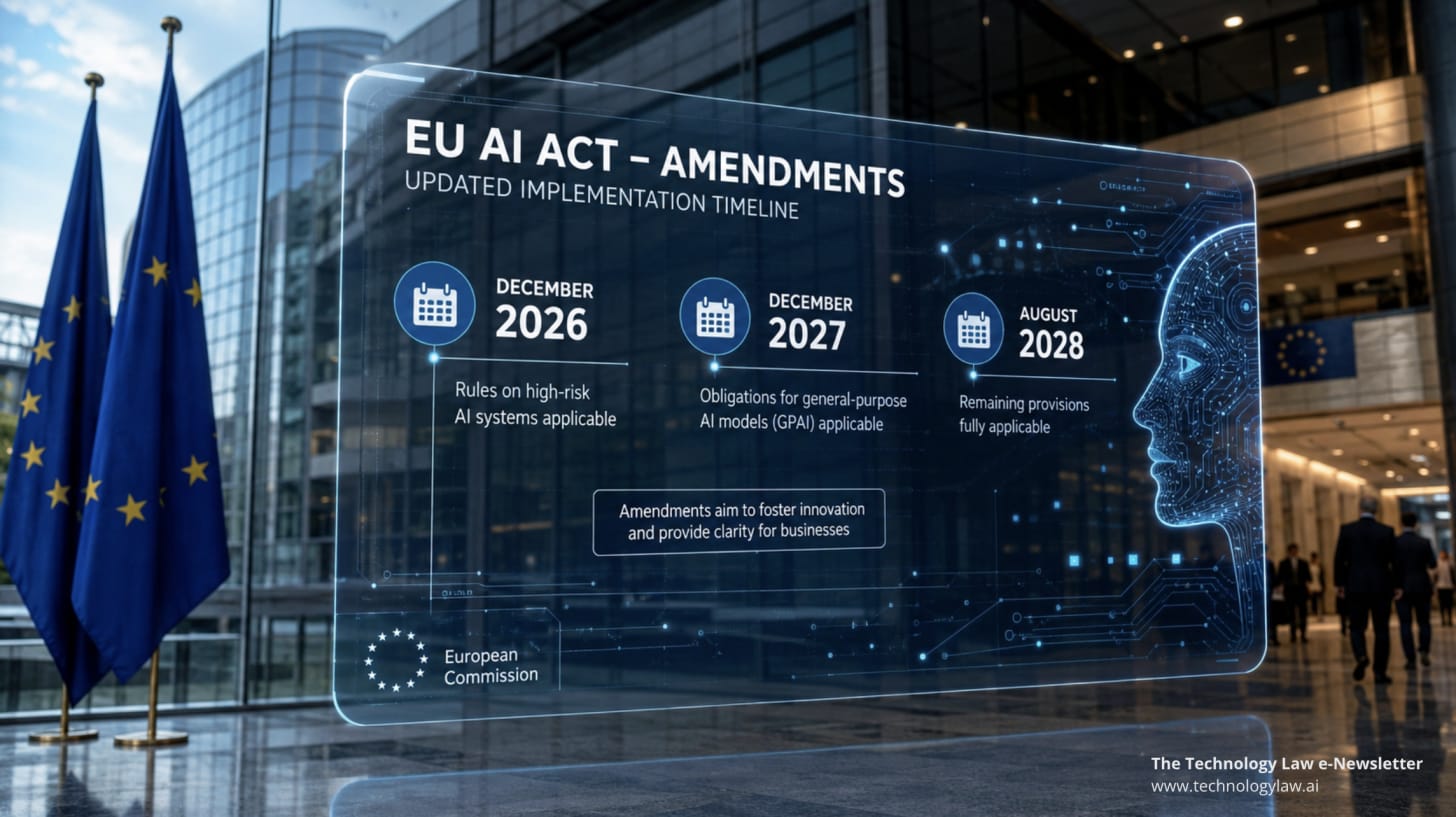

1. EU institutions have reached a provisional agreement to amend the EU AI Act: Key dates for high‑risk AI obligations move to December 2027 and August 2028, with generative AI watermarking pushed to December 2026. The deal also introduces a ban on AI systems that produce non‑consensual intimate imagery and child sexual abuse material (CSAM).

2. U.S. Department of War releases UFO/UAP files: The U.S. Department of War declassified more than 160 UFO files, including photographs and transcripts from Apollo missions, following a directive from President Trump.

3. Joint Canadian investigation into OpenAI: Canada’s federal and provincial privacy authorities released a joint report finding that OpenAI’s initial training of ChatGPT violated privacy laws due to over‑collection, lack of consent and inaccuracies.

4. Case of the week [Chatrie v. United States]: The U.S. Supreme Court will decide whether geofence warrants (orders compelling companies like Google to provide location data for all devices within a defined area during a specified time) violate the Fourth Amendment’s protection against unreasonable searches.

Lead Story

EU leaders agree to amend the EU AI Act: Deal reached on the AI Omnibus Regulation

On 7 May 2026, the Council of the European Union and the European Parliament announced a provisional political agreement to amend the EU AI Act.

The amendments, negotiated as part of a digital omnibus package, do not alter the AI Act's underlying structure but adjust the timelines for certain obligations and tighten prohibitions on specific AI practices.

Key elements of the provisional agreement

Deferred application of high‑risk obligations. Under the current AI Act, high‑risk obligations for use cases listed in Annex III, such as biometric identification, critical infrastructure, education, employment, law enforcement and border management, would apply from 2 August 2026. The provisional agreement pushes these obligations to 2 December 2027, giving additional time for implementation. Obligations for AI systems that serve as safety components of products covered by EU sectoral legislation (Annex I) will now apply from 2 August 2028, rather than 2 August 2027. These dates are fixed regardless of whether harmonised standards are available.

Watermarking and generative AI output detection. A new deadline of 2 December 2026 will apply to generative AI providers obligated to detect and watermark AI‑generated content. The postponement is intended to give the underlying technical tools and standards time to mature while ensuring that generative systems remain traceable.

Prohibition of “nudifier” apps and AI‑generated child sexual abuse material (CSAM). The agreement explicitly bans AI systems that generate non‑consensual intimate imagery or CSAM. Providers must comply with this prohibition by 2 December 2026. The ban addresses concerns over tools that digitally remove clothing or synthesise exploitative images without consent.

Registration and transparency requirements. Providers of “exempted” AI systems (systems that fall within a high‑risk category but are deemed not to pose a significant risk under the Act’s derogations) must still register those systems in the EU’s public database, albeit with simplified information requirements. Meanwhile, high‑risk providers still need to conduct conformity assessments, maintain technical documentation and operate risk management systems.

Bias detection using sensitive data. The amendment broadens the scope for using sensitive personal data (for example, data revealing racial or ethnic origin) to detect and correct biases in AI systems, but processing must be strictly necessary and limited to addressing specific bias types. This reflects the EU’s recognition that accurate bias detection sometimes requires access to sensitive attributes.
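To see why bias detection can require sensitive attributes, consider a minimal Python sketch of one common check: a demographic‑parity comparison of selection rates across groups. The metric, the group labels and the data are illustrative assumptions; the provisional agreement, as reported, does not prescribe any particular bias test.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Share of positive outcomes per group; each decision is a (group, approved) pair."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        positives[group] += int(approved)
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(decisions):
    """Largest difference in selection rate between any two groups."""
    rates = selection_rates(decisions)
    return max(rates.values()) - min(rates.values())


# Invented outcomes from a hypothetical screening model, labelled with a protected attribute.
decisions = [
    ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", True),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]

# Without the group label, only the aggregate rate is visible and no disparity shows up.
overall_rate = sum(approved for _, approved in decisions) / len(decisions)

# With the label, the per-group comparison reveals the gap.
rates = selection_rates(decisions)
gap = parity_gap(decisions)
print(overall_rate, rates, gap)
```

The aggregate approval rate of 0.5 looks unremarkable; only the labelled comparison shows that group_a is approved three times as often as group_b. This is the practical point behind allowing narrowly scoped processing of sensitive data for bias correction.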

Other clarifications. The omnibus removes overlapping obligations for AI embedded in machinery products, narrows the concept of “safety components” and centralises enforcement of certain general-purpose AI systems under the EU AI Office. It also reduces the volume of information required for registration and tasks the Commission with issuing guidance on classification.

What are the implications of these changes?

The provisional agreement preserves the EU’s risk‑based framework while giving organisations more time to prepare for high‑risk obligations and generative AI watermarking.

The explicit ban on “nudifier” tools and AI‑generated CSAM shows regulators taking a firm stance on preventing abuse.

The deferral should not be seen as a reprieve; organisations developing or deploying AI must continue building compliance programmes and documenting risk management.

Once finalised and formally adopted, these amendments will mark the first major adjustment to the world’s most comprehensive AI regulation.

Important Technology Law Updates

1. Department of War releases UFO and UAP files: what does space law say?

On 8 May 2026, the U.S. Department of War (DoW) published more than 160 previously classified files related to unidentified anomalous phenomena (UAP) and unidentified flying objects (UFOs).

The release follows President Donald Trump’s directive to increase transparency about UAPs, which instructed the DoW, in collaboration with the Office of the Director of National Intelligence, to declassify unresolved cases.

The files include photographs, videos and mission transcripts. Among other things, they contain an alleged 1947 report of “flying discs” and transcripts from Apollo 12 and 17, in which astronauts described bright particles and unusual phenomena.

The DoW emphasised that these cases remain unresolved because they lack sufficient data for definitive conclusions, and it invited private‑sector researchers to examine the materials.

Although some commentators and enthusiasts hoped that the release would confirm extraterrestrial life, the files provide no conclusive evidence of alien technology.

Critics have suggested the release may deflect attention from terrestrial military spending or other policy issues.

Nonetheless, the disclosure presents a useful moment to revisit international space and technology law.

Space law context

The Outer Space Treaty of 1967, the foundation of international space law, outlines several principles relevant to UAP disclosures. It stipulates that the exploration and use of outer space must benefit all countries; outer space and celestial bodies cannot be appropriated by states; and activities must be conducted for peaceful purposes.

States are responsible for national space activities and liable for damage caused by their space objects. The treaty also emphasises the need to avoid harmful contamination and to recognise astronauts as envoys of mankind.

If future evidence shows that UAPs originate from human technology (for example, secret military spacecraft), questions could arise about compliance with the treaty’s prohibition of weapons of mass destruction in orbit and the requirement that celestial bodies be used exclusively for peaceful purposes.

Conversely, if extraterrestrial origin were ever substantiated, states would need to consider how the “province of all mankind” principle applies to contact with non‑state entities.

The release, therefore, encourages jurists to think beyond speculation and to consider whether existing frameworks adequately regulate unknown phenomena.

Transparency and governance

The DoW’s release also highlights how governments balance national security with public interest. The files were previously withheld due to classification. Transparency advocates argue that withholding information can fuel conspiracy theories and undermine trust.

Declassifying unresolved phenomena carries its own risks: adversaries could infer U.S. surveillance capabilities, or citizens could misinterpret ambiguous data. Future disclosures may require international coordination to ensure that released information aligns with obligations under space treaties and fosters collaborative research rather than unilateral narratives.

2. Greece proposes a constitutional amendment on AI

Greece, historically known as the birthplace of democracy, has proposed a constitutional amendment that would explicitly mention artificial intelligence.

Prime Minister Kyriakos Mitsotakis explained that the amendment aims to ensure that AI serves human freedom and social prosperity, while mitigating risks. He emphasised that AI should not undermine individual rights, democratic processes or equal access to public services.

Why a constitutional amendment?

For years, governments, including the EU, have approached AI regulation through the lens of consumer protection, including mandating transparency disclosures, creating risk categories and imposing fines for non‑compliance.

Greece’s proposal goes further by positioning AI as a matter of constitutional order. This reflects an emerging view that AI is not just a consumer product but also a tool of public governance.

AI systems influence elections, policing, welfare administration, tax systems and border control. When AI begins to impact state power, fundamental constitutional principles (dignity, proportionality, equality and due process) become relevant.

Constitutions are designed to restrain power and protect individuals; in the digital age, this restraint must cover both government use and private platforms that operate as gatekeepers of information.

Details of the proposal

Although the exact text of the amendment has not been finalised, it will likely include a clause stating that AI must respect human dignity and fundamental rights and that Parliament may regulate AI to protect democratic order.

Greece is already experimenting with AI in border surveillance, tax administration and other public services.

Lawmakers hope that embedding AI principles into the constitution will provide a foundation for future legislation and judicial interpretation.

Global implications

If adopted, Greece’s constitution would be among the first to explicitly address AI. It could influence discussions within the EU and beyond.

By contrast, the EU’s AI Act regulates AI through ordinary legislation; a constitutional clause adds a layer of protection that cannot be easily amended.

3. Joint investigation into OpenAI’s data processing practices

Canada’s privacy regulators have been at the forefront of scrutinising large AI models. On 25 April 2026, the Office of the Privacy Commissioner of Canada (OPC), along with the privacy commissioners of Québec, British Columbia and Alberta, released a joint report on their investigation into OpenAI’s data practices.

The investigation began with a complaint regarding ChatGPT’s handling of personal information during training and in user interactions.

Key findings

The OPC concluded that OpenAI’s initial training of ChatGPT contravened Canadian privacy law. OpenAI collected and used personal information from publicly accessible websites without valid consent and did not provide sufficient transparency about its practices.

According to the report, OpenAI lacked a legal basis under federal and provincial laws for processing personal data. The investigation also criticised OpenAI for failing to adequately address accuracy and accountability issues, such as providing users with incorrect or biased information.

OpenAI cooperated with the investigation and implemented several measures while it was under way. These include limiting the use of personal information in training, removing sensitive data from datasets, publishing a blog post about its privacy practices, retiring older models such as GPT‑3.5 and GPT‑4, and improving procedures for individuals to challenge inaccurate outputs.

OpenAI also committed to implementing additional measures in the coming months, such as better consent mechanisms and data retention policies.

The Privacy Commissioner of Canada found the complaint well‑founded but conditionally resolved because the corrective measures addressed the primary concerns.

Québec’s commissioner emphasised that implied consent is inadequate when the collection or use of information is outside individuals’ reasonable expectations, involves sensitive data or creates a meaningful risk of harm. The regulators concluded that modern privacy laws need to catch up with generative AI models and called for legislative reform.

What do AI developers need to do?

Training AI models on public data does not automatically exempt organisations from privacy obligations. Consent must be meaningful, and individuals should have mechanisms to verify, correct or delete their data from training datasets.

Organisations operating in multiple jurisdictions face a patchwork of privacy laws; Canada’s case shows that federal and provincial commissioners can jointly investigate AI developers and secure compliance commitments.

Developers should build privacy into their designs, minimising data collection and ensuring explicit consent where required.

Case of the Week

Litigants: Chatrie v. United States

Citation: 136 F.4th 100 (4th Cir. 2025), cert. granted, 2026 WL 120676 (U.S. Jan. 16, 2026) (No. 25-112)

Facts and dispute

On 20 May 2019, a credit union in Midlothian, Virginia, near Richmond, was robbed. As part of their investigation, police obtained a “geofence warrant” compelling Google to provide information on all devices that had been within a 150‑metre radius of the credit union around the time of the robbery.

Google first responded with anonymised location data for 19 devices (Step 1). Investigators then asked Google to expand the time frame and requested more specific data on nine accounts (Step 2), followed by subscriber information for three accounts (Step 3) that matched suspect movements. Police identified Okello Chatrie as one of the device owners and charged him with robbery.
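The three‑step procedure can be made concrete with a minimal Python sketch. It assumes a hypothetical provider dataset of anonymised location records; the coordinates, identifiers, haversine distance check and time windows are invented for illustration and do not describe Google’s actual systems or the data in Chatrie. Step 3, mapping the remaining IDs to subscriber accounts, is left out.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


@dataclass(frozen=True)
class LocationPoint:
    """One hypothetical location record held by a provider."""
    device_id: str  # anonymised identifier, not a subscriber name
    lat: float
    lon: float
    timestamp: datetime


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two latitude/longitude points."""
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_M * asin(sqrt(a))


def step1_geofence(points, centre, radius_m, start, end):
    """Step 1: anonymised IDs of every device seen inside the fence during the window."""
    lat0, lon0 = centre
    return {
        p.device_id
        for p in points
        if start <= p.timestamp <= end
        and haversine_m(lat0, lon0, p.lat, p.lon) <= radius_m
    }


def step2_expand(points, selected_ids, start, end):
    """Step 2: every point for the chosen IDs over a wider window, with no radius limit."""
    return [
        p for p in points
        if p.device_id in selected_ids and start <= p.timestamp <= end
    ]


# Invented example data around a made-up centre point.
centre = (37.5, -77.6)
t0 = datetime(2024, 1, 1, 12, 0)
points = [
    LocationPoint("anon-1", 37.5005, -77.6, t0),                         # ~56 m away, inside
    LocationPoint("anon-2", 37.5100, -77.6, t0),                         # ~1.1 km away, outside
    LocationPoint("anon-3", 37.5, -77.6005, t0 + timedelta(hours=3)),    # inside fence, outside window
    LocationPoint("anon-1", 37.52, -77.62, t0 + timedelta(minutes=45)),  # later movement of anon-1
]

# Step 1: a 150 m fence and a one-hour window centred on t0.
hits = step1_geofence(points, centre, 150, t0 - timedelta(minutes=30), t0 + timedelta(minutes=30))

# Step 2: investigators pick IDs and widen the time window.
trail = step2_expand(points, hits, t0 - timedelta(hours=1), t0 + timedelta(hours=1))
print(hits, len(trail))
```

Step 2 in this sketch applies no radius at all: which IDs are pursued and how far the window widens are choices made by investigators, which is the kind of discretion the district court said lacked judicial oversight.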

Chatrie moved to suppress the evidence, arguing that the geofence warrant violated the Fourth Amendment. The U.S. District Court for the Eastern District of Virginia agreed that the warrant was overly broad because it gathered data on many innocent individuals and allowed investigators to narrow results without judicial oversight.

However, the court declined to suppress the evidence due to the “good faith” exception.

On appeal, a divided panel of the Fourth Circuit held that Chatrie lacked a reasonable expectation of privacy in his location data because he had voluntarily opted in to Google’s Location History service. The full Fourth Circuit then reheard the case en banc and affirmed the denial of suppression in a brief per curiam order, but the judges were sharply divided and no single rationale commanded a majority.

Legal issues and questions before the Supreme Court

The central question is whether geofence warrants, which compel companies to disclose location data for all devices in a defined area during a specified time, satisfy the Fourth Amendment’s probable cause and particularity requirements.

Traditionally, warrants must particularly describe the place to be searched and the persons or things to be seized. In Chatrie, the warrant targeted an area and time period rather than a particular suspect. Critics argue that it functioned more like a general warrant, casting a digital dragnet over everyone whose phone happened to be nearby. Supporters counter that geofence warrants are a necessary investigative tool in the digital era and that anonymisation, combined with judicial oversight, minimises intrusions.

Some observers expect the Court to issue a narrow ruling focusing on whether additional judicial oversight is needed during the multi‑step narrowing process, rather than banning geofence warrants outright.

The concern with the multi‑step process is that investigators request anonymised data, then expand or narrow the time frame, and finally seek subscriber information, all without a separate warrant or further judicial review after the initial authorisation.

The Court may address whether each step constitutes a separate search requiring independent probable cause, and whether geofence warrants amount to the kind of general warrant the Fourth Amendment was designed to prohibit.

Decision and potential impacts

At the time of writing, the Supreme Court has not issued its decision. If the Court upholds the warrant, law enforcement agencies are likely to continue using geofence warrants with minimal changes.

However, if the Court rejects the warrant as unconstitutional, it could limit or eliminate the use of geofence warrants nationwide. This would require law enforcement to develop alternative investigative methods and may lead to new privacy protections for location data.

A narrow decision might require courts to oversee each step of a geofence search, ensuring that investigators cannot unilaterally narrow or expand data sets without additional warrants.

Such a ruling could also affect other broad data demands, such as those for cell‑site location information or IP addresses. Regardless of the outcome, Chatrie will likely shape the future of digital privacy law and clarify how constitutional protections apply to novel surveillance techniques.

Other Developments in Technology Law

1. Ashley MacIsaac sues Google over AI Overview mix‑up

Canadian fiddle virtuoso Ashley MacIsaac filed a defamation lawsuit against Google after the company’s AI Overview product falsely asserted that he had been convicted of sexual crimes.

According to the statement of claim, AI Overview summarised search results by saying MacIsaac had committed sex crimes and was convicted of sexual assault; the information was entirely false and not present in the sources that the AI summarised. MacIsaac learned of the defamatory statement when a concert venue cancelled a performance and later discovered that the AI system repeated the false allegations across various queries.

The lawsuit seeks at least USD 1.5 million in damages. MacIsaac’s lawyers argue that Google should be held liable for the statements generated by its AI because the system functions as a publisher and presents its outputs as factual summaries.

Google responded that AI Overviews are dynamic and automatically generated. Google eventually removed the erroneous summary and said it uses such incidents to improve its models.

The case raises questions about whether AI companies can be liable for defamation when their models generate false statements that do not appear in any underlying source.

It also illustrates how AI summarisation tools can amplify inaccuracies and cause reputational harm. Courts will need to consider whether existing defamation law applies to machine‑generated speech and whether AI providers have a duty to pre‑screen outputs for accuracy.

2. UAE’s AI‑powered legal platform Legaline launches

Dubai‑based Legaline, launched on 6 May 2026, touts itself as the first full‑cycle AI‑native legal services platform in the United Arab Emirates. The company developed its own AI stack using in‑house machine‑learning and neural‑network engineering and built a corpus of more than 60,000 searchable legal passages.

Legaline is designed for the complex UAE legal environment, covering federal laws, seven emirate‑level systems, the Dubai International Financial Centre (DIFC), the Abu Dhabi Global Market (ADGM) and more than 40 free zones. Its AI supports research across 33 jurisdictions and offers real‑time translation among English, Russian and Arabic.

The platform combines multiple tools: a public AI information assistant called “Baby Legal Bot” that helps users understand regulatory jurisdictions; an AI research assistant for lawyers with inline citations; an AI deliberation tool called “Brainstorm” that uses different foundation models to generate analytical diversity; and an automated drafting module that generates commercial documents within seconds, complete with references to current laws.

Legaline also integrates an end‑to‑end workflow where clients post tasks, lawyers bid through a closed auction, negotiation and signing occur in an in‑app chat, and payment is held in escrow until completion. The company emphasises that its AI tools are trained exclusively on primary UAE legal sources and that user data is not used to train models.

3. Kash Patel and the normalisation of AI in law enforcement

FBI Director Kash Patel has become a prominent advocate for AI in law enforcement. In a recent interview, he claimed that AI was not used at the FBI until the current administration and that he is now “using it everywhere.”

Patel credited AI with stopping a potential school shooting in North Carolina and another in New York by quickly analysing tips and triaging threats. He also said AI has enabled the FBI’s National Threat Operations Center to sift through thousands of weekly tips and to “pop fingerprints immediately” in its criminal databases.

According to Patel, the FBI has embedded major tech companies into its infrastructure to build AI tools and modernise its systems.

While Patel portrays AI as a force multiplier, his comments illustrate a broader trend: the rapid integration of AI into law enforcement without broad public deliberation.

The ability to triage tips and match fingerprints quickly can help prevent crimes, but it also raises concerns about due process, bias, accountability and transparency. When AI tools screen large volumes of data, there is a risk of false positives that can lead to unwarranted scrutiny.

Studies of predictive policing systems have repeatedly found that they can reflect and magnify existing biases in their training data.

Critics worry that normalising AI in law enforcement could entrench opaque surveillance techniques and reduce human oversight.

The U.S. legal framework has not kept pace with AI’s proliferation in policing. Unlike sectors such as healthcare or finance, law enforcement often employs AI without sector‑specific regulation. Chatrie v. United States, discussed above, indicates how new surveillance methods challenge constitutional protections.

Democratic institutions must examine whether traditional oversight mechanisms suffice. Questions include: should AI‑generated leads be subject to judicial review before action is taken? How can agencies ensure that training data does not encode racial or socio‑economic bias? How should AI vendors be vetted for law enforcement use? Policy makers must engage with these issues to avoid normalising AI surveillance without legal safeguards.

Latest Opportunities

Remote jobs for lawyers

1. Enterprise Account Executive DACH, Legal Tech, €80k base + 100% OTE (Remote Germany): DACH region Enterprise Account Executive to lead enterprise sales strategy, contract negotiations, and customer growth (find out more).

2. Counsel, Applied Legal Research (Remote Singapore): Centari is hiring a transactional lawyer for its Applied Legal Research team to help develop AI tools for corporate legal work, combining expertise in corporate transactions, legal analysis and generative AI product development (find out more).

3. Legal Expert (Remote UK): A remote‑based legal expert role advertised by a UK consultancy seeks professionals with expertise in technology law and digital regulation (find out more).

4. Junior/Mid AI Legal Specialist, EverAI (Remote UK): This role is aimed at lawyers or legal specialists interested in AI governance and product support (find out more).

Conferences, fellowships, scholarships, and calls for papers

5. Full scholarship (PhD & Postdoc): AI, medical law and ethics (The University of Amsterdam, Netherlands): Two fully funded PhD positions and one postdoctoral role in AI, medical law and ethics. Projects cover generative AI in healthcare and fairness in medical AI. Applications close on 18 May 2026, with a September 2026 start (find out more - PhD and Postdoc).

6. Call for papers on Quantum Technology and Law, Leiden Law School: Leiden Law School invites chapters for an edited volume on quantum technology and law. Topics include legal, ethical, social, and regulatory issues linked to quantum technology. Abstracts should be 150 to 250 words, and chapters should be around 8,000 to 10,000 words (find out more).

7. Free Microsoft Virtual Training Day: Introduction to Azure (18 May 2026): Microsoft’s free virtual event offers a foundational overview of cloud concepts and core Azure services across compute, networking, storage and identity, along with demonstrations of AI tools. Participants who complete the session receive a 50 percent discount on the Azure Fundamentals certification exam (find out more).

8. AI, Justice and the Rule of Law (Free Course): UNESCO and the University of Oxford are offering a free self‑paced online course launched on 27 April 2026 to equip judges, lawyers and students with an understanding of how AI interacts with human rights and legal reasoning (find out more).

This edition of the Weekly Briefing highlights a growing tension between innovation and regulation.

Europe is attempting to reform its AI Act to balance technological progress with fundamental rights, while Greece proposes embedding AI principles into its constitution. Canada’s regulators are reminding AI developers that privacy obligations apply even when data is publicly available.

AI is gradually becoming pervasive, but we must insist that it serves human dignity, accountability and the rule of law.

Leave your comments to join the conversation.

Disclaimer

This newsletter is provided for informational and educational purposes only. It does not constitute legal advice, and readers should not rely on it as a substitute for professional legal counsel. The views expressed are those of the authors alone.