Technology Law Weekly Briefing: Newsletter Issue 92

Newsletter Issue 92: The EU’s signature of the Council of Europe AI treaty, legal AI tools, chatbot liability investigations, digital asset property rulings, regulatory developments, and job opportunities in AI governance and tech law.

Technology law is entering a period in which institutional responsibility cannot be deferred to innovation narratives or voluntary guidelines. Governments are writing treaties on AI governance, prosecutors are testing the limits of liability for chatbot outputs, courts are treating online gaming assets as property, and regulators are scrutinising algorithmic markets. Legal practice itself is also changing as firms integrate AI into core workflows. This briefing examines these developments and considers what they reveal about accountability, authority, and risk in our digital systems.

This week in Technology Law:

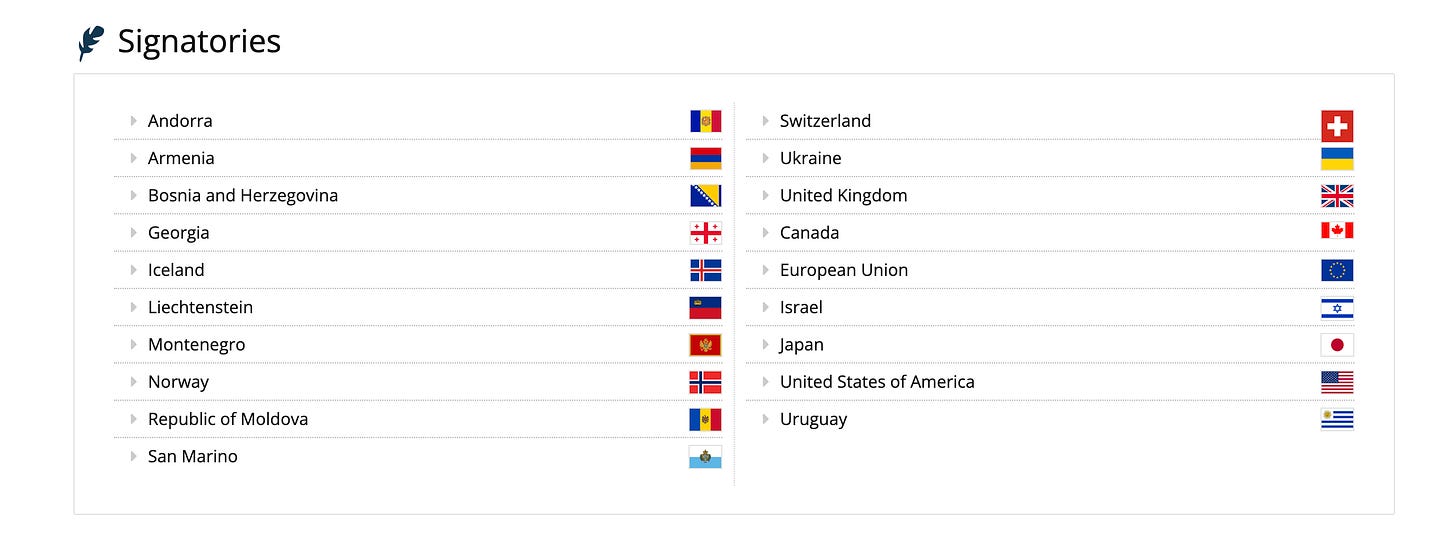

1. EU signature of the Council of Europe Framework Convention on Artificial Intelligence: The European Parliament has backed the EU’s signature of the first international legally binding treaty dedicated to AI governance, human rights, democracy, and the rule of law.

2. Anthropic and Freshfields agree on a deal to create legal AI tools: The partnership shows how major law firms are moving from testing AI tools to building them into research, contract review, drafting, and internal legal operations.

3. Criminal investigation into OpenAI and ChatGPT: The investigation raises an important question for AI governance, namely when chatbot output can become relevant to criminal responsibility, platform design, and safety duties.

4. R v Lakeman [2026] EWCA Crim 4: The Court of Appeal has held that virtual currency in an online game is property capable of being “stolen” under the criminal law.

5. Job opportunities in tech law and governance.

Lead Story

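The European Parliament’s backing of the EU’s signature of the Council of Europe Framework Convention on Artificial Intelligence is a significant milestone: the Council of Europe describes the convention as the first international legally binding treaty on AI governance.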

This treaty is designed to make sure that activities across the AI lifecycle remain consistent with human rights, democracy, and the rule of law.

Parliament gave its consent to the EU’s signature with 455 votes in favour, 101 against, and 74 abstentions. The next step is for the Council of the EU to conclude the agreement.

The treaty applies to AI-related activities carried out by public authorities and private actors acting on their behalf. Private sector actors are also expected to address AI-related risks in line with the convention’s objectives, either by directly applying the convention’s obligations or by providing equivalent protection.

The treaty does not replace the EU AI Act. It sits beside it.

The Parliament’s own explanation states that the EU AI Act and other EU laws already provide a higher and more detailed level of protection within the EU internal market. The treaty establishes a broader international baseline for transparency, documentation, risk management, oversight, fundamental rights, democratic safeguards, and rule-of-law values.

The text of the treaty is built around the idea that AI systems should not be treated only as products, software, or business tools, because they can affect dignity, autonomy, equality, privacy, public institutions, and democratic participation.

The treaty, therefore, requires states to adopt or maintain legislative, administrative, or other measures to give effect to its provisions. Those measures may be specific to particular sectors or horizontal across technologies.

This matters because international AI governance is often discussed as if it were only about voluntary principles. This treaty takes a firmer route by giving contracting states a common legal framework for AI risks without requiring every country to adopt the EU AI Act. That is important for states that want human rights-based AI governance but do not share the EU’s regulatory position.

Lawyers advising public bodies, technology suppliers, and regulated businesses will need to track how the convention is implemented in national law. Procurement, due diligence, contract drafting, and AI governance policies will need to address whether the use of AI affects rights, public accountability, and democratic values. AI policy documentation may become more important because transparency and oversight cannot work without reliable records.

Regulators will also have a clearer basis for cooperation. AI systems cross borders, and many of the companies developing or deploying them operate globally. A shared treaty framework will help regulators discuss risk, compliance, and institutional responsibility using common concepts. That does not remove national differences, but it gives regulators a firmer starting point.

Companies should not treat the convention as a distant public law instrument. Any business building AI tools for government, justice, education, healthcare, public services, security, finance, or civic participation should assume that human rights review will become a more visible part of the buying and supervision process. Strong internal records, testing, escalation procedures, and clear accountability lines will become easier to defend than vague statements about responsible AI.

The EU’s support for the Framework Convention positions AI governance within an international legal framework grounded in human rights, democracy, and the rule of law.

Technology Law Tracker: Other Relevant Updates

1. Freshfields and Anthropic partner on developing legal AI tools

Freshfields and Anthropic have agreed to work together on new AI applications for legal services. It was reported that Freshfields will collaborate with Anthropic’s legal team to develop tools for legal and market research, contract review, document drafting, and automation of internal business services workflows. Freshfields will also receive early access to future Anthropic models and products. No financial terms were disclosed.

The development also comes at a time when courts are paying closer attention to AI errors in legal documents. Court filings have recently contained a growing number of AI-generated errors, including inaccurate citations.

Legal AI is judged not only by speed, but also by reliability, auditability, and professional accountability. A tool that drafts faster but imposes verification burdens may save time in one place while creating risk in another.

2. OpenAI and ChatGPT investigated after deadly shooting

Florida Attorney General James Uthmeier has launched a criminal investigation into OpenAI and ChatGPT after a 2025 shooting at Florida State University that killed two people and injured six others.

The suspect was charged with multiple counts of murder and attempted murder, and the state is examining whether OpenAI bears criminal responsibility for ChatGPT’s alleged role in the incident. The Office of Statewide Prosecution has subpoenaed OpenAI for information and records.

Uthmeier said at a press briefing that the chatbot advised the shooter on the type of gun to use, ammunition compatibility, and whether a gun would be useful at short range.

OpenAI’s position, as reported by Reuters, is that the shooting was a tragedy but that the company is not responsible for it. The company also said it identified an account believed to be associated with the suspect and shared information with law enforcement.

It added that ChatGPT provided factual responses based on broadly available public information and did not encourage or promote illegal or harmful activity.

The investigation will test how prosecutors view AI systems that produce information later linked to violent conduct. Criminal law usually focuses on human intent, conduct, causation, and knowledge.

Applying those ideas to a chatbot raises difficult issues. An AI system can generate a harmful answer without having human intent. A company can design safety layers, but users may still ask dangerous questions in ways that are not always easy to detect.

The case also highlights the difference between public information and actionable assistance. Many facts about weapons, chemicals, cyber tools, and other dangerous subjects exist online. The legal question is whether an AI system changes the risk by making such information easier to access, combine, personalise, or apply. That question will remain central to AI safety discussions.

Companies offering general-purpose AI tools should treat this development as a warning that safety design will be examined after serious harm occurs. It will not be enough to say that a user misused an AI system. Prosecutors, regulators, victims, and the public will ask what safeguards existed, how they were tested, what the company knew about foreseeable misuse, and how the system responded to high-risk prompts.

This is also a reminder that AI governance needs incident response planning. Companies should be prepared to preserve records, cooperate with lawful investigations, review model behaviour, and communicate carefully. The legal outcome remains uncertain, but the governance lesson is already clear.

3. UK courts and regulators consider clearer rules for AI-generated legal documents

The UK Civil Justice Council has been consulting on whether new procedural rules are needed for the use of AI in preparing court documents, including pleadings, witness statements, and expert reports.

The consultation reflects judicial concern about AI hallucinations, including false authorities, inaccurate case references, and wasted court resources. It also refers to recent cases in which AI-generated or AI-assisted legal material caused problems before the courts.

The interim report suggests that where a statement of case bears the name of the legal representative taking professional responsibility, further AI-specific rules may not be needed. The report raises similar points for skeleton arguments and advocacy documents, emphasising professional responsibility as the key safeguard.

Witness statements are treated differently. The report notes that witness evidence should generally be in the witness’s own words and from personal knowledge. It proposes that where AI has been used, other than transcription, the legal professional should include a declaration that AI has not been used to generate content, including alteration, embellishment, strengthening, dilution, or rephrasing of the witness’s evidence.

The judiciary is also examining wider questions about AI, legal professional privilege, and confidentiality. In a speech on legal professional privilege in the age of AI, the Chancellor of the High Court noted that public AI systems may raise confidentiality concerns.

The speech discussed a tribunal decision holding that uploading confidential documents to a public AI tool such as ChatGPT can place that information in the public domain, breach client confidentiality, and waive privilege.

This matters for every lawyer, not only litigators. AI can help draft, summarise, translate, and organise material, but it cannot remove professional responsibility. Courts are likely to focus less on whether AI was used and more on whether the final document is accurate, honest, properly verified, and consistent with duties owed to the court.

Legal professionals should have a clear verification process. They should know which AI tools are approved for use in their organisation, which data must not be entered, how outputs are checked, and how errors are recorded. Litigants in person may also need clearer public guidance because AI tools can make legal arguments look more polished without making them legally sound.

Case of the Week

Case: R v Lakeman

Citation: [2026] EWCA Crim 4

Facts

R v Lakeman concerned virtual gold pieces in the online game Old School RuneScape. The gold pieces were recorded in a ledger maintained by Jagex, the game’s developer and publisher. The terms and conditions stated that the gold pieces could be redeemed only inside the game, and Jagex could delete them or anything purchased with them. Sale or gifting in the real world was prohibited.

The defendant was an employee of Jagex. He was accused of hacking into player accounts, transferring a large number of gold pieces to accounts under his control, and selling them for real-world currency. The alleged theft involved around 705 billion gold pieces, valued at £543,123.

Legal issue

The legal issue was whether virtual in-game currency could constitute property capable of being stolen under section 4 of the Theft Act 1968 (UK). This was not a simple question because the gold pieces existed inside a game environment, were subject to contractual restrictions, and could be modified or deleted by the game operator.

The defence argument was helped by the fact that the game rules did not allow real-world trading. Jagex also retained significant control over the game environment. The prosecution’s case depended on persuading the court that those features did not prevent the gold pieces from being property for the purposes of theft.

Decision

The Court of Appeal held that the gold pieces were property capable of being stolen for the purposes of section 4 of the Theft Act 1968. The court stressed that the definition of property in criminal law differs from that in private law. The court also discussed related issues concerning digital assets as property, including the idea that digital assets can be functional things distinct from their underlying code.

The court adopted a wider approach to property for theft purposes. It considered whether the thing was freely bought and sold and capable of dishonest dealing that deprives the owner of its benefit. On that basis, it allowed the prosecution’s appeal on the preliminary issue.

The relevance of this judgment

Digital assets now carry real social and economic value. Games, virtual worlds, blockchain, digital collectables, token-based platforms, and online marketplaces all involve items that may be controlled by code, governed by terms, and valued by users. Criminal law cannot avoid these assets simply because they are intangible or platform-based.

The decision is especially important for gaming companies. Terms and conditions may say that in-game assets cannot be sold outside the game, but that does not necessarily prevent a court from treating those assets as property in a criminal context. Platform operators should therefore think carefully about account security, internal access controls, employee permissions, audit trails, and user protection.

The decision adds to a growing body of case law on how the law should treat digital things that have practical utility, scarcity, control, and market value. It does not answer every private law question about ownership, transfer, insolvency, or platform liability, but it gives courts and lawyers another important reference point.

The law is becoming more comfortable with the idea that digital value can be legally meaningful. Users may consider a virtual item valuable even if it exists on a controlled platform. Courts are beginning to take that experience seriously when dishonesty, deprivation, and market value are present.

Other Developments in Technology Law:

1. Investigation into music streaming platforms over alleged undisclosed promotion

The Texas Attorney General has launched an investigation into Spotify, Apple Music, Pandora, Amazon Music, and YouTube Music over alleged payola-type arrangements. The office says it is examining whether streaming services accepted undisclosed payments to promote certain songs, artists, or content through playlists, recommendations, or ranking systems. Civil Investigative Demands have been issued to the companies.

2. UK chatbot offence proposal withdrawn before debate

A proposed amendment to the Crime and Policing Bill would have created offences related to AI chatbots that produce illegal or harmful content for children, or that use certain deceptive or exploitative chatbot designs.

The amendment also included duties for risk assessment, mitigation, transparency reporting, and potential criminal penalties. The amendment was withdrawn before debate, so no decision was taken on it.

3. Dutch financial regulator highlights AI risks in capital markets

The Netherlands Authority for the Financial Markets has published a report on AI in capital markets.

The regulator says AI can improve speed and reduce costs, but it can also affect price formation, amplify market vulnerabilities, and create new risks where trading models rely on similar data and objectives. It also warns that false or manipulated information could affect models that rely on news, sentiment, and social media.

Latest Opportunities

Jobs, conferences, fellowships, and calls for papers

1. Junior/Mid AI Legal Specialist, EverAI (Remote UK): This role appears aimed at lawyers or legal specialists interested in AI governance and product support.

2. AdTech Lawyer, Axiom (Remote US): Axiom is hiring an AdTech Lawyer to advise on digital advertising agreements, data sharing, campaign data flows, and marketing compliance.

3. Smart Contract Legal Architect, Loti AI (Remote US): Loti AI is hiring for a legal engineering role focused on likeness rights, on-chain rights enforcement, Solidity, and digital identity. This may interest lawyers who combine intellectual property, technology law, and smart contract literacy.

4. Business Teacher, Postsecondary AI Training, Alignerr (Remote Australia): This opportunity appears to involve teaching business for AI training, with some connections to other disciplines such as privacy and data protection.

5. Lawyer, Global Licensing, Coins.ph (Remote Hong Kong): Coins.ph is hiring a lawyer to lead licensing work across payments, remittance, e-money, and virtual asset services. The listing seeks experience with financial regulation, licensing applications, regulators, external counsel, and multiple jurisdictions.

6. Call for papers on Quantum Technology and Law, Leiden Law School: Leiden Law School invites chapters for an edited volume on quantum technology and law. Topics include legal, ethical, social, and regulatory issues linked to quantum technology. Abstracts should be 150 to 250 words, and chapters should be around 8,000 to 10,000 words.

Conclusions

The common thread in this briefing is accountability. AI is becoming part of law, finance, public administration, entertainment, and criminal investigation, so legal systems are now asking who takes responsibility when automated systems affect people.

The most useful question is whether AI use can be explained, checked, and governed with sufficient care to preserve trust.

Disclaimer

This newsletter is provided for informational and educational purposes only. It does not constitute legal advice, and readers should not rely on it as a substitute for professional legal counsel. The views expressed are those of the Technology Law e-Newsletter and do not necessarily reflect the views of any affiliated institution.