Council of Europe Sets New Standards for Online Safety and Empowerment

Newsletter Edition 90: Council of Europe’s new internet safety framework demands stronger platform accountability while raising controversial questions about state influence over online speech.

The Council of Europe’s new recommendation on online safety presents an ambitious attempt to reconcile freedom of expression with the governance of digital platforms. The document proposes stronger expectations on states and platforms while insisting on human rights safeguards. At the same time, the framework raises difficult questions about regulatory reach, platform accountability, and the risk that protective measures may legitimise the over-censorship of online discourse.

TL;DR

This update covers a comprehensive Council of Europe recommendation, adopted in April 2026 together with an explanatory memorandum, that aims to balance online safety with freedom of expression. It recognises that digital platforms have become central to public communication and must respect human rights while empowering users.

The recommendation identifies distinct categories of online risk, including harm to personal or community well-being, threats to democratic processes and systemic risks arising from technology. It stresses that interventions should be evidence-based, proportionate and grounded in law.

States are urged to create a safe and inclusive digital environment through education, transparency and support for independent media. They should avoid introducing vulnerabilities into online services or imposing broad monitoring obligations.

Online platforms are expected to embed user safety and empowerment into their design, governance and operations. Platforms of significant influence must conduct risk assessments and consult stakeholders before making changes.

Content creators are reminded of their responsibility to contribute to a healthy public discourse with accuracy and integrity. Transparency about monetisation and adherence to ethical standards are highlighted.

The recommendation introduces user empowerment duties for platforms, covering design, transparency, fair process and collective action. It emphasises age assurance mechanisms to protect children and access to platform data for independent researchers.

Introduction

The digital environment has become central to our social and civic lives. People gather news, express opinions, learn, play and work through online platforms. The Council of Europe, aware of the benefits and dangers of this transformation, adopted Recommendation CM/Rec(2026)4 on 8 April 2026 to guide member states toward safe and empowering online environments. This recommendation acknowledges that while the internet enhances freedom of expression, it also exposes users to harassment, misinformation and other risks that can undermine democracy. It seeks to protect individual and collective rights without sacrificing the openness of the digital realm.

The recommendation builds on previous Council of Europe instruments on internet freedom and the responsibilities of internet intermediaries. The explanatory memorandum emphasises that the right to freedom of expression protects speech that may offend, shock or disturb.

The recommendation proposes principles and measures for states, platforms and content creators to address online risks while enhancing user autonomy.

Understanding online risks

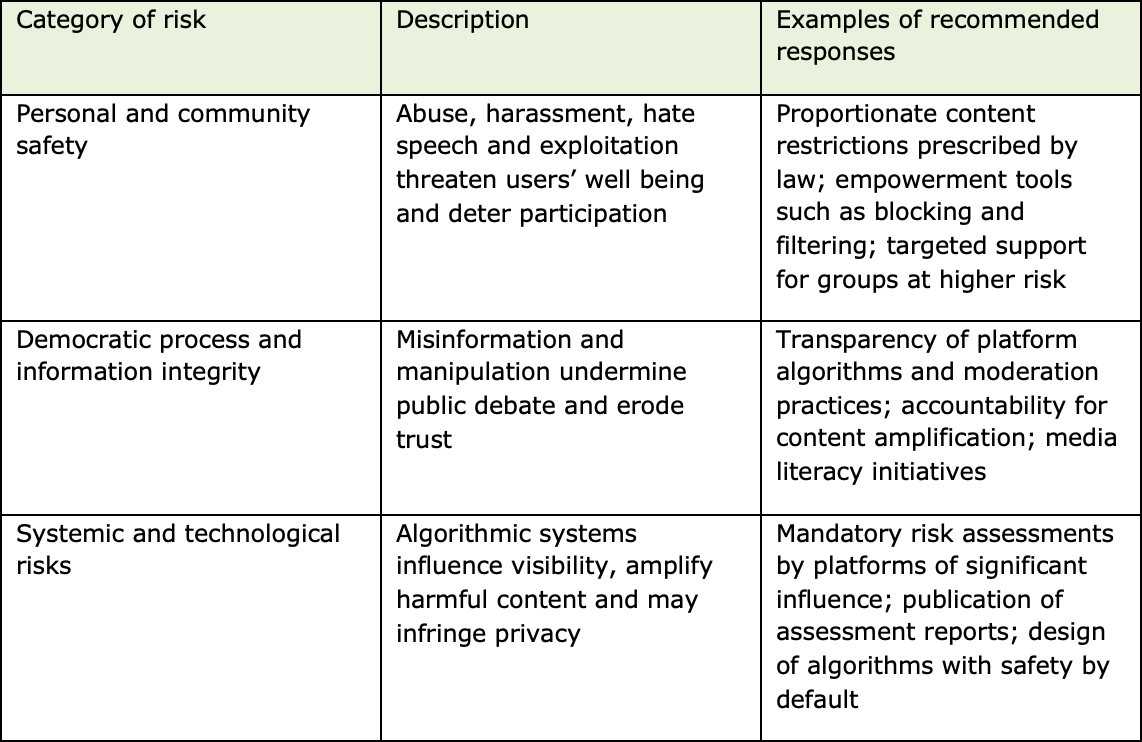

The recommendation categorises online risks that affect freedom of expression into three groups:

Personal and community safety risks: Users may encounter abuse, harassment or content that threatens their well-being. This includes hate speech, violence and exploitation. Such risks can deter individuals from participating in public discourse, leading to self-censorship and exclusion.

Threats to democratic processes and information integrity: Misinformation, targeted disinformation campaigns, and manipulation of public debate undermine informed decision-making. These threats erode trust in institutions and polarise societies. Election interference and conspiracy narratives can weaken democratic legitimacy.

Systemic risks from the design and operation of platforms: Algorithmic systems can influence the visibility of content, amplify harmful material or suppress minority voices. Platform design choices, driven by engagement metrics, may prioritise sensational content, leading to echo chambers and exposing users to harmful material. Artificial intelligence tools that produce or moderate content introduce new risks and amplify existing ones.

The memorandum explains that certain groups face heightened risks online: children, women and girls, people with disabilities or minority identities, migrants and content creators such as journalists and activists. Online abuse targeted at these groups often extends into the physical world, reinforcing social inequalities. When freedom of expression is curtailed by intimidation or discrimination, public debate suffers.

Principles for states

The recommendation stresses that states should foster an enabling online environment that is safe, inclusive and pluralistic.

This involves measures both online and offline. States should develop comprehensive strategies that address underlying societal inequalities that lead to online abuse. Education and media literacy are central to empowering users; states are encouraged to invest in digital citizenship initiatives and strengthen media diversity.

States must avoid actions that compromise online safety. They should not introduce vulnerabilities into technical systems that protect privacy. Enhanced surveillance or general monitoring obligations are discouraged, as they threaten freedom of expression and could lead to the over-removal of lawful content. Instead, interventions should be evidence-based, transparent and proportionate. When developing regulations, governments should consult a wide range of stakeholders, including civil society and affected communities.

The recommendation specifies that any content rule must be prescribed by law, pursue a legitimate aim and use proportionate means.

States should clearly identify what content is illegal or regulated, ensuring legal certainty and preventing arbitrary enforcement. Blocking entire services or domains is considered a severe interference with freedom of expression and should occur only under strict judicial oversight.

Responsibility for enforcing content restrictions cannot be transferred wholesale to private companies. States remain ultimately accountable for human rights protection. When authorities instruct platforms to remove content, platforms should inform users so they can challenge the decision. Legal frameworks must avoid compelling intermediaries to monitor all user content.

Responsibilities of platforms

Online platforms serve as gatekeepers to public discourse. The recommendation acknowledges that they play a central role in facilitating expression and, therefore, bear responsibilities commensurate with their impact.

Platforms should integrate user safety considerations into their design and governance. Design decisions should not favour sensationalism over safety or pluralism.

Platforms of significant influence – those with large user bases and substantial impact on public communication – are expected to conduct risk assessments before implementing changes. These assessments should consider human rights impacts, involve stakeholder consultation and be published in a way that the public can understand. When risks are identified, platforms must implement mitigation measures before introducing new designs. This approach encourages transparency and accountability in corporate decision-making.

Platforms are encouraged to employ staff knowledgeable about local contexts and languages, enabling them to understand specific risks in different regions. They should pay special attention to groups facing higher risks, such as children, women and minorities. Collaboration with user groups and civil society can inform more responsive policies.

One notable principle is the graduated and differentiated approach. Smaller platforms should not be overburdened by obligations suited for very large services. Conversely, platforms with significant influence should be subject to heightened duties that reflect their power and reach.

Content creator responsibilities

The recommendation recognises content creators as an essential part of the digital public sphere. It calls on them to uphold accuracy, fairness and integrity.

Creators who reach large audiences or claim professional expertise have a heightened duty to act in good faith.

Transparency is vital: creators should disclose their sources of income and clearly label sponsored content. Corporate creators should be transparent about ownership structures.

Parents or legal representatives of children acting as content creators have additional obligations. They must prioritise the child's best interests and preserve their dignity and safety.

These provisions acknowledge the rising number of child influencers and aim to protect them from exploitation.

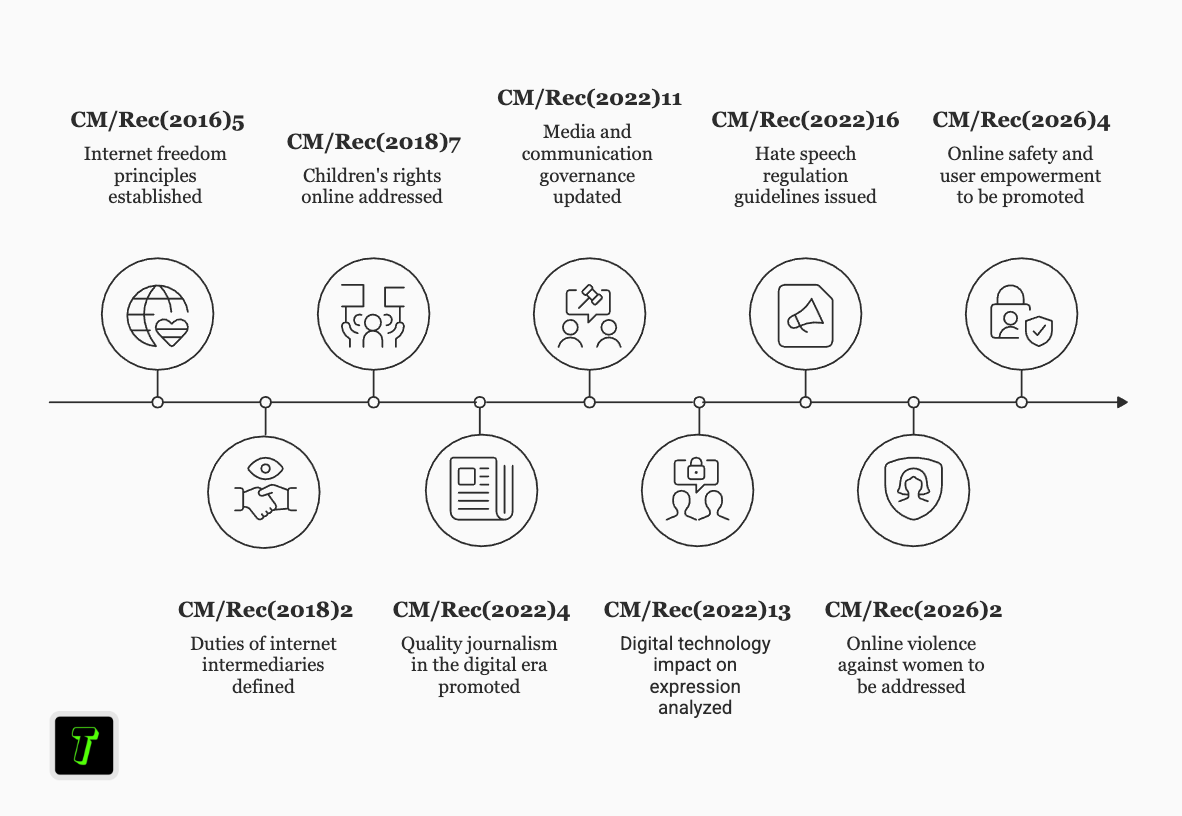

Timeline of Council of Europe digital rights instruments

The timeline below illustrates how the Council of Europe has progressively addressed digital rights and online safety through a series of recommendations.

It places Recommendation CM/Rec(2026)4 in a broader context of regulatory development.

Events are plotted chronologically from 2016 to 2026.

Legal frameworks: content rules, intermediary liability and platform accountability

The recommendation divides legal frameworks into three pillars: content rules, intermediary liability rules, and platform accountability and user empowerment rules.

This structure recognises that different types of interventions require different approaches.

Content rules

Content rules govern what kind of expression may be restricted and under what circumstances. They must be clearly prescribed by law, pursue a legitimate aim and remain necessary and proportionate.

The recommendation reiterates that content lawful offline should generally be lawful online. When restrictions are necessary, the law should specify whether content is illegal (fully prohibited) or legal but regulated (subject to access or visibility limitations). The degree of risk severity and its imminence should guide the level of restriction.

Member states are encouraged to regularly review their online content laws to ensure clarity and relevance in a changing digital context.

Enforcement should, in principle, occur through formal orders by judicial or independent authorities, with users and intermediaries having access to effective remedies. Platforms may adopt additional rules through terms of service, but these should be transparent and consistent.

Intermediary liability rules

Excessive liability can turn intermediaries into de facto censors and encourage over-removal of lawful content. States should therefore avoid imposing disproportionate liability on intermediaries for user-generated content.

Liability may be appropriate when intermediaries fail to act expeditiously upon becoming aware of illegal content, provided that notice procedures are transparent and effective. The conditions for removal, including time frames, should be differentiated according to the nature and seriousness of the content.

When platforms enforce content restrictions in accordance with State orders, they should provide sufficient information to enable users to challenge those decisions. This ensures accountability and prevents arbitrary or discriminatory enforcement.

Platform accountability and user empowerment rules

Platform accountability rules address systemic duties that go beyond content moderation. They require platforms to design services with user safety and empowerment in mind. States should legislate to require platforms of significant influence to conduct risk assessments and publish documentation on their human rights impacts. Regulatory authorities overseeing these frameworks must be independent, well-resourced and subject to judicial review.

States are encouraged to share responsibility by involving non-state actors such as researchers, user groups and out-of-court dispute settlement bodies. These actors should operate under transparency and accountability mechanisms. By decentralising oversight, the recommendation envisions a collaborative approach to safety.

User empowerment measures

At the heart of the recommendation is the belief that empowering users can mitigate online risks and foster resilience. User empowerment duties are divided into four categories: design-related, transparency-related, fair process and collective action.

Empowerment by design

Platforms should enable users to personalise their online experience. Automated systems for organising and recommending lawful content should allow users to opt out, hide certain categories of content or block other users.

Third-party labelling tools can help users make informed choices about content. Platforms of significant influence may need to open their systems to third-party tools that assist in personalisation, subject to accountability requirements.

Design must also promote inclusivity. Platforms should address accessibility barriers faced by persons with impairments and ensure that safety tools are usable by all.

Age assurance systems are required to protect children from age-restricted content, but they must respect children's privacy and evolving capacities. The recommendation cautions against excluding children from online spaces or exacerbating discrimination.

Transparency

Users should understand how platforms organise content and moderate behaviour. Platforms are urged to explain their algorithmic systems for content organisation and curation. They should publish statistics on content moderation decisions and qualitative reports on the accuracy of automated moderation tools.

Transparency extends to advertising: platforms must disclose the identities of advertisers, the targeting techniques used, and spending. They should reveal how user-generated content is monetised and the principles used to allocate resources to creators.

Independent researchers play a crucial role in uncovering systemic issues. The recommendation emphasises that researchers should have access to platform data in a secure, legal, and privacy-compliant manner. Access may be granted through external vetting when personal data is involved. Researchers should also be allowed to use platforms to study social phenomena.

Procedural rights and fair process

Platforms must be clear about the contractual policies and rules that govern their services. Users should be notified of changes to these rules in advance, with the changes explained in accessible language.

When platforms make content moderation decisions, they should notify affected users, explain the grounds for the decision and provide avenues for appeal. Appeals should include independent oversight mechanisms, such as out-of-court dispute-resolution bodies.

Collective action

Users should be able to report breaches of platform rules and legal restrictions easily. Platforms should provide feedback on follow-up actions. Users submitting notices about potentially illegal content should also receive feedback and have the right to appeal decisions.

The recommendation encourages recognising professional flaggers and user groups who can act as independent experts and advocate for user interests.

Categories of online risks and recommended responses

The significance of this recommendation

The Council of Europe’s new recommendation is both an evolution and a consolidation of existing human rights principles applied to the digital space.

It acknowledges the power asymmetry between large platforms and individual users, and addresses this by imposing differentiated responsibilities based on size and impact.

This approach recognises that uniform regulations can stifle innovation or overwhelm smaller actors, while leaving major services inadequately regulated.

The recommendation seeks to prevent harm at the systemic level rather than react to individual incidents.

The recommendation’s focus on empowerment is notable. It goes beyond protection to encourage users to actively curate their digital environment. Personalisation tools, third-party labels and accessible interfaces are intended to give users agency over what they see and engage with.

This emphasis reflects an understanding that safety cannot be achieved solely through content removal. A pluralistic digital sphere requires that users are equipped to navigate diversity and dissent without being overwhelmed by harm.

Another significant element is the recognition of intersecting vulnerabilities. Women, children and marginalised communities often bear the brunt of online abuse. The recommendation highlights the need for age-appropriate systems and inclusive design to ensure that safety tools serve all users. By acting on these points, states and companies can begin to address the long-standing inequities that persist both online and offline.

Transparency provisions also have far-reaching implications. Requiring platforms to disclose how algorithms work, how moderation is conducted and who funds advertising opens space for public scrutiny. It empowers researchers to study the impacts of digital platforms and fosters trust by shedding light on practices that have traditionally been opaque. However, implementing meaningful transparency will require cooperation between regulators, platforms and civil society.

The recommendation aligns with global trends towards regulating digital services. The European Union’s Digital Services Act and the United Kingdom’s Online Safety Act 2023 similarly impose obligations on platforms to assess and mitigate risks.

The Council of Europe’s focus on human rights provides an additional layer of normative guidance, emphasising the need to preserve freedom of expression. This recommendation may therefore influence future national legislation and international norms.

This newsletter includes references and links to external sources for informational and educational purposes only. Inclusion of these sources does not constitute endorsement, approval, or verification of their views, accuracy, or conclusions. Responsibility for the content of the referenced materials remains solely with the original authors and publishers. This newsletter does not constitute legal advice.
